UPDATE (November 09, 2021)

This project is no longer maintained here. Its work has moved to the W3C, where it has been taken up by a Community Group. For more information, please visit the new Accessibility Discoverability Vocabulary For Schema.org Community Group.

To review or report issues with the Schema.org Accessibility Properties for Discoverability Vocabulary, please refer to the vocabulary’s GitHub issue tracker.

Original Report (Outdated)

Submitted by Madeleine Rothberg, NCAM, WGBH

As the Accessibility Metadata Project's funding from the Bill & Melinda Gates Foundation has concluded, we wish to thank the Foundation very much for its support of our work on the discoverability of accessible educational resources, and for its recognition of the importance of accessibility in furthering inclusive education.

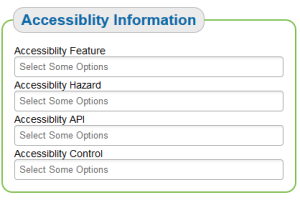

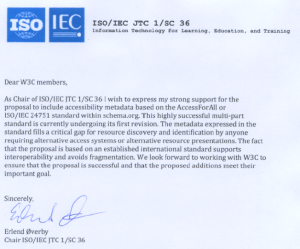

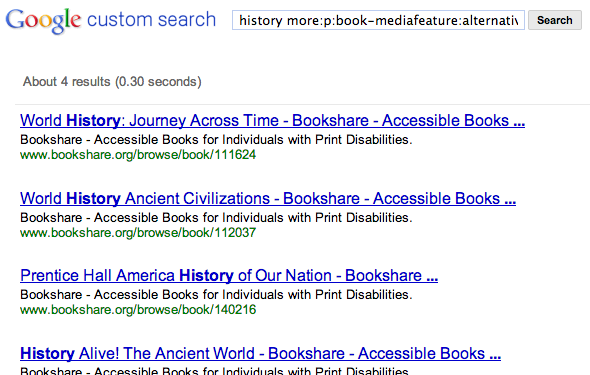

Thanks to the Gates funding, we have made tremendous strides toward our two goals: developing standards for accessibility metadata, and having those standards accepted by Schema.org. Schema.org is the organization that maintains a list of agreed-upon tags that all search engines can use in common, so that their users can refine searches to find exactly what they are looking for. Now that Schema.org’s standard set of properties for tagging online educational resources includes accessibility metadata, these properties have been picked up by the Internet Archive’s Open Library initiative, Hathi Trust Digital Library, and the Learning Registry, a leading aggregation platform for metadata about online learning resources. We have also added accessibility metadata tags both to Bookshare and to a payload of metadata submitted to the Learning Registry, and Bookshare now automatically submits accessibility metadata for its titles in the registry. Because of our reference implementation, Bookshare’s accessible content is more easily discoverable via online search, and others can better understand how to add accessibility metadata to their own content.

Beyond our grant commitment, we developed additional reference implementations and tools:

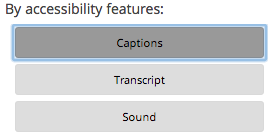

- Searching for videos with closed captions: Before this project, it was not possible to search for captioned videos beyond the YouTube domain. We collaborated with the creator of the “WP YouTube Lyte” plug-in and contributed code that lets WordPress site administrators automatically add accessibility properties to videos that have closed captions, which in turn allows for search based on accessibility. Now people who need captioned videos can easily find them on all WordPress sites that use the plug-in, with tools such as Google’s Custom Search Engine.

- Described video tagging: Smith-Kettlewell Eye Research Institute has developed a web-based video description product called YouDescribe that enables anyone to describe YouTube videos on the web. To help people with visual impairments easily discover videos described with the YouDescribe platform, Smith-Kettlewell is automatically tagging those videos with accessibility properties. Now search engines such as Google Custom Search Engine can index those properties.
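To give a rough sense of what tagging a video with accessibility properties can look like, here is a minimal sketch in Python that builds a Schema.org `VideoObject` description and serializes it as JSON-LD. It is an illustration only, not the markup that WP YouTube Lyte or YouDescribe actually emit: the helper name `video_metadata`, the titles, and the example.org URLs are all hypothetical, and the choice of JSON-LD as the serialization is an assumption. The `accessibilityFeature` property and the values `captions` and `audioDescription` come from the Schema.org accessibility vocabulary.

```python
import json


def video_metadata(name, url, features):
    """Build a Schema.org VideoObject description as a plain dict.

    Hypothetical helper for illustration; not part of any plug-in.
    """
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "contentUrl": url,
        # Accessibility features the resource offers, e.g. closed captions.
        "accessibilityFeature": list(features),
    }


# A captioned video (placeholder title and URL).
captioned = video_metadata(
    "Example lecture",
    "https://example.org/videos/lecture.mp4",
    ["captions"],
)

# A video with an added audio description track (placeholder title and URL).
described = video_metadata(
    "Example documentary",
    "https://example.org/videos/documentary.mp4",
    ["audioDescription"],
)

# Serialize for embedding in a page, e.g. inside a JSON-LD script element.
print(json.dumps(captioned, indent=2))
print(json.dumps(described, indent=2))
```

A search tool that indexes these properties could then filter on `accessibilityFeature` to return only captioned or described videos.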

The adoption of our project’s proposed set of accessibility metadata tags and the implementation successes listed above are a tremendous milestone in the collaborative journey towards our vision of a “Born Accessible” world: a world in which all content born digital is made accessible, and discoverable, from the outset. Our implementations demonstrate that broad adoption of accessibility metadata is possible.

Now that this groundwork has been laid, what is next? We must now encourage content management systems, publishers, search engines, and sites like Wikimedia to start using Schema.org accessibility metadata, so that one day everyone will be able to find the great accessible content that is out there. There are also elements that we would still love to add; see the “properties under consideration” section of the specification. If you are interested in seeing this work progress and would like to discuss your ideas for projects and next steps, please contact us by submitting a contact request in the project update sign-up form on this page.